Tutorial 2: Statistical Hypothesis Testing

January 20, 2026

The principles of statistical hypothesis testing: generating evidence-based conclusions without complete biological knowledge!

CRITICAL: Regular practice with R and RStudio, the statistical software used in BIOL 322 and introduced during tutorial sessions, and consistent engagement with tutorial exercises are essential for developing strong skills in Biostatistics.

REPORTS/EXERCISES: Tutorial reports are independent exercises and are not submitted for grading; however, students are strongly encouraged to complete them. Some tutorials include solutions at the end to support self-assessment and review. Other tutorials do not require model answers because they are procedural and can be easily self-assessed by verifying that the code runs correctly and produces the expected type of output.

General setup for the tutorial

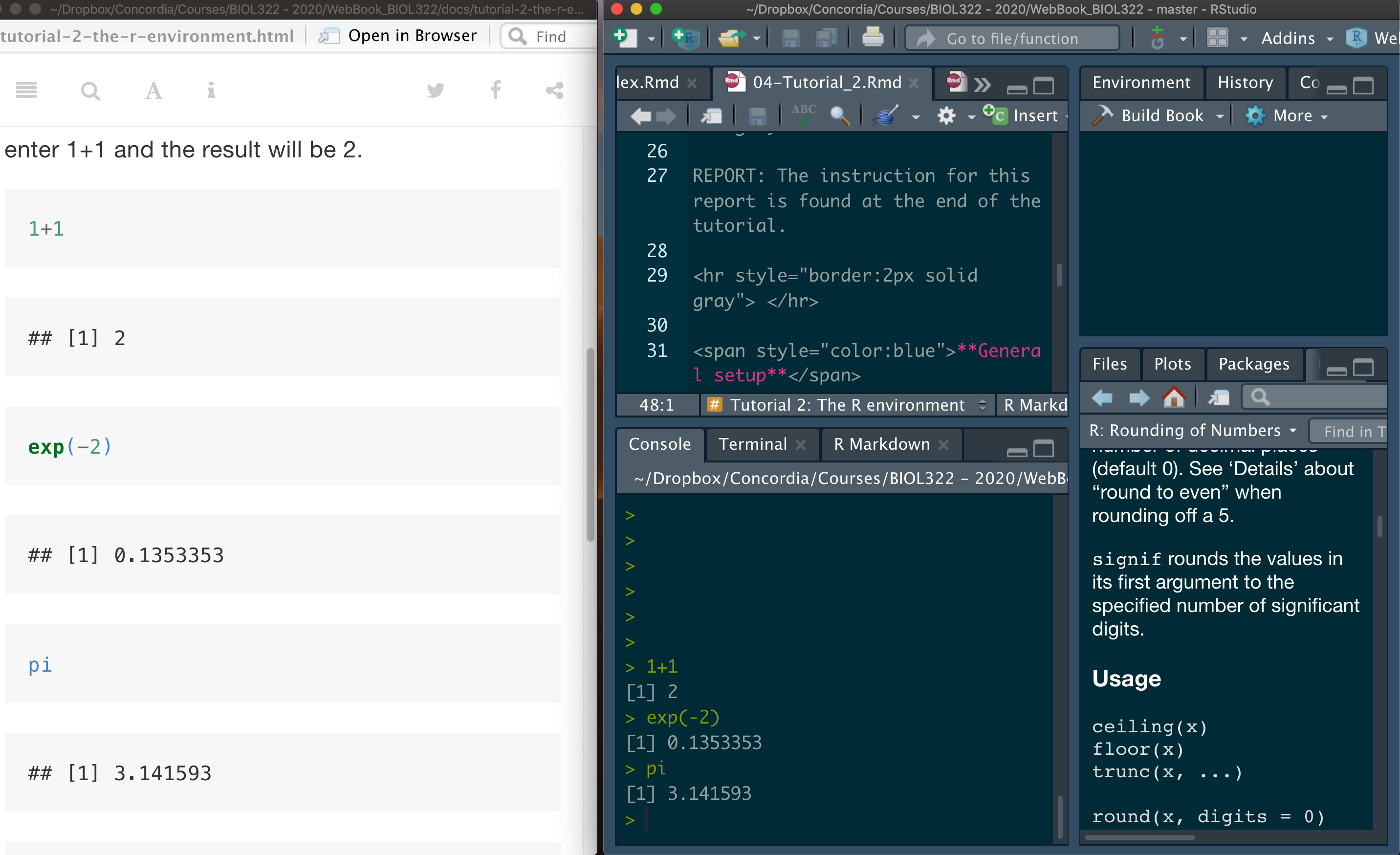

A good way to work through the tutorial is to have it open in our WebBook, with RStudio open beside it.

Now that you are ready, let’s start our tutorial. READ! READ! and READ! Don’t simply copy and paste code.

As we discussed in Lecture 2, statistical hypothesis testing is an intimate stranger!! Most users of statistics know how to implement and interpret hypothesis tests, but many do not fully understand how they work, including their underlying logic, subtleties, and philosophy. This tutorial is designed to help you develop this deeper and much-needed understanding.

Statistical hypothesis testing is the most widely used statistical framework for generating evidence for or against questions (research questions or otherwise) based on data.

Remember, as discussed in Lecture 2:

The statistical hypothesis testing framework is a quantitative method of statistical inference that allows us to generate evidence for or against a research hypothesis. However, practitioners of statistics are often confused about what this actually means: statistical hypothesis testing is commonly said to generate evidence against (but not for) the null hypothesis, and it does not formally generate evidence for or against the alternative hypothesis.

The principles of statistical hypothesis testing using a simple problem: Do toads exhibit handedness?

The right hand of toads (as seen in class, but a small summary is provided here)

From Whitlock and Schluter: “Humans are predominantly right-handed. Do other animals exhibit handedness as well?” Bisazza et al. (1996) tested this possibility on the common toad. They randomly sampled 18 toads from the wild, wrapped a balloon around each individual’s head, and recorded which forelimb each toad used to try to remove the balloon.

First step: transform the research question into a statistical question

Statistically, the question above can be translated into: “Do right-handed and left-handed toads occur with equal frequency in the toad population, or is one type more frequent than the other?”

Result of the study: 14 toads were right-handed and 4 were left-handed. Are these results evidence of handedness in toads?

The statistical hypothesis testing framework for inference is then used to pose the following question:

Is the sample we obtained consistent or inconsistent with samples drawn from a “theoretical” population of toads in which right- and left-handed individuals occur in equal proportions?

If inconsistent, then the data would have generated evidence that other animals exhibit handedness.

Parts of this tutorial were built based on Whitlock and Schluter’s code (https://rpubs.com/mdlama/153914), but much more detailed explanations and interpretations were added to improve conceptual understanding and clarify the underlying statistical logic related to frequentist statistical hypothesis testing.

Since we sampled 18 toads, we must use this same sample size to generate the sampling distribution for a theoretical population in which right- and left-handed toads are equally likely. Remember that different sample sizes lead to different amounts of sampling variation — with small samples we expect more random variability, whereas larger samples tend to give more stable and precise results.

Let’s start by learning how to take a random sample from a categorical variable having two possible outcomes from a theoretical population of known probabilities (here, 50%/50% chance of each hand) with the desired sample size (i.e., 18 individuals).

The function below will simulate the following “manual” implementation:

Put millions of small pieces of paper into a bag, half with “Left” written on them and the other half with “Right”. In practice, we need far fewer pieces; with 36 pieces (18 of each), for example, any combination, from zero “Left” and 18 “Right” to 18 “Left” and zero “Right”, would be possible by chance alone.

Sample 18 pieces of paper from the bag with replacement. Replacement means that you take one piece of paper, write down whether you got an “L” or an “R”, and put it back in the bag before taking another piece. Replacement is critical here because otherwise you would change the original theoretical population while sampling it. If you were to keep each piece of paper (i.e., sampling without replacement), you would change the probabilities of sampling “L”s and “R”s. For example, imagine that your first 3 pieces were “L”s and that you did not replace them; you would then have increased the probability of sampling an “R”. Remember one of the criteria for random sampling: “The selection of observational units in the population (e.g., individual piece of paper here) must be independent, i.e., the selection of any unit (e.g., L or R) of the population must not influence the selection of any other unit.”

The function below is pretty self-explanatory, but some details may be useful. c("L", "R") sets the categories we want; we could have written “Left” and “Right”, but “L” and “R” make the code look “cleaner”. If three categories were required, we could have set them as c("A", "B", "C"). size is the sample size. prob is the probability of each category; with 3 categories of equal probability (33.33% each), we would write prob = c(1/3, 1/3, 1/3). Lastly, replace = TRUE means that we sample with replacement, so (for the purpose of the problem here) each observation (“toad”) is drawn with the same probabilities, regardless of what was drawn before.

Sample1 <- sample(c("L", "R"), size = 18, prob = c(0.5, 0.5), replace = TRUE)

Sample1

We can then calculate how many individuals in sample 1 belong to a particular category (here we chose right-handed); you will see later that choosing left-handed instead wouldn’t make a difference, because the number of left-handed toads is simply 18 minus the number of right-handed ones:

sum(Sample1 == "R")

Let’s take a second sample and count how many of its toads are right-handed:

Sample2 <- sample(c("L", "R"), size = 18, prob = c(0.5, 0.5), replace = TRUE)

Sample2

sum(Sample2 == "R")

Most likely, Sample1 and Sample2 have different numbers of right-handed individuals.
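To see why replacement matters in the paper-bag analogy above, here is a minimal sketch using a hypothetical finite bag of 36 pieces, 18 “L” and 18 “R” (the object names bag, no.replace and with.replace are ours, not part of the original tutorial). Drawing all 36 pieces without replacement always returns exactly 18 “R”, because every draw changes what is left in the bag; drawing with replacement lets the count vary from sample to sample.

```r
# Hypothetical finite bag: 18 "L" and 18 "R" pieces of paper
bag <- rep(c("L", "R"), each = 18)

# Without replacement: drawing all 36 pieces empties the bag,
# so every sample contains exactly 18 "R"
no.replace <- replicate(5, sum(sample(bag, size = 36, replace = FALSE) == "R"))
no.replace

# With replacement: every draw comes from the full 50%/50% bag,
# so the number of "R" changes from sample to sample
with.replace <- replicate(5, sum(sample(bag, size = 36, replace = TRUE) == "R"))
with.replace
```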

Now, let’s generate a huge number of samples from the theoretical population (i.e., a computer approximation of the infinite sampling process that is otherwise handled analytically, via calculus) and, for each of them, record the number of right-handed toads:

number.samples <- 100000

samples.n18 <- replicate(number.samples,sample(c("L", "R"), size = 18, prob = c(0.5, 0.5), replace = TRUE))

dim(samples.n18)

The matrix has one row per toad (18) and one column per sample (100000). Let’s print the first 3 samples:

samples.n18[,1:3]

Now let’s calculate, for each sample, the number of individuals that are right-handed:

results.n18 <- apply(samples.n18, MARGIN = 2, FUN = function(x) sum(x == "R"))

The number of left-handed toads can be calculated simply as:

18-results.n18

Take a look at the vector results.n18. Each value in it represents the number of right-handed toads in a random sample of 18 individuals from a theoretical population in which the numbers of right- and left-handed toads are the same. Remember that the function head lists the first 6 values of a vector (or the first 6 rows of a matrix):

head(results.n18)

Let’s build the frequency distribution table for the samples (i.e., the sampling distribution of right-handed toads from the theoretical population):

Table.TheoreticalPop <- table(results.n18, dnn = "numberRightToads")

Table.TheoreticalPop

Obviously, the total number of samples in Table.TheoreticalPop is 100000:

sum(Table.TheoreticalPop)

We can also calculate the relative frequency of samples with different numbers of right-handed toads as:

Table.TheoreticalPop/100000

As you might expect, the most common outcome is samples of 18 toads containing 9 right-handed and 9 left-handed individuals. However, many samples differ from this value purely by chance, illustrating sampling variation around the theoretical expectation of a 50%/50% split between right- and left-handed toads. To make the table look a bit better, use the function data.frame:

data.frame(Table.TheoreticalPop)

These relative frequencies are also our estimates of the probability of each class (i.e., of each number of right-handed toads), so the same calculation gives the probabilities:

Table.TheoreticalPop/100000

Because we are dealing with a discrete variable (the number of right-handed toads), a histogram would group these discrete values into bins. Instead, we use a barplot based on the table we generated for the theoretical population so that each distinct value appears separately:

barplot(height = Table.TheoreticalPop, space = 0, las = 1, cex.names = 0.8, col = "firebrick", xlab = "Number of right-handed toads", ylab = "Frequency")

The distribution is symmetric, but note that the relative frequency of samples with 8 right-handed toads may not be exactly the same as that of samples with 10 right-handed toads; the same is true for 2 and 16 right-handed toads, because we did not generate the distribution from an infinite number of samples. Note, however, that they are pretty similar!
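The small asymmetries mentioned above disappear when the sampling distribution is computed exactly. As a sketch, base R’s dbinom() gives the exact probability of each number of right-handed toads (0 to 18) under H0, which can be compared with simulated relative frequencies. To keep the block self-contained, the simulation is regenerated here with rbinom(), a shortcut (our choice, not the tutorial’s code) that draws the number of “R” in 18 draws directly instead of building the matrix of letters.

```r
set.seed(2)  # arbitrary seed, only so this sketch is reproducible
# Shortcut: number of "R" in 18 draws with P(R) = 0.5, for 100000 samples
sim.counts <- rbinom(100000, size = 18, prob = 0.5)

# Simulated relative frequencies for every possible count, 0 to 18
sim.rel <- as.numeric(table(factor(sim.counts, levels = 0:18))) / 100000

# Exact probabilities under H0 from the binomial distribution (n = 18, p = 0.5)
exact.prob <- dbinom(0:18, size = 18, prob = 0.5)

# Side-by-side comparison, rounded for display
round(data.frame(count = 0:18, simulated = sim.rel, exact = exact.prob), 4)

# The exact distribution is perfectly symmetric: P(8) equals P(10), P(2) equals P(16), etc.
all.equal(exact.prob, rev(exact.prob))
```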

Now, let’s go back to our original data (i.e., the real sample), in which 14 toads were right-handed. What is the probability of obtaining a sample as extreme as, or more extreme than, 14 right-handed toads if the sample came from the theoretical population?

frac14orMore <- sum(results.n18 >= 14)/number.samples

frac14orMore

Given that the sampling distribution is symmetric, the value above is pretty similar to:

frac4orLess <- sum(results.n18 <= 4)/number.samples

frac4orLess

If infinitely many samples were taken, frac14orMore and frac4orLess would be exactly the same. That is also why we could concentrate on one limb (right): the counts for left-handed toads can easily be calculated by subtraction.

Given that the interest here is not to generate evidence that the dominant limb of toads is their right (or left) limb, but rather to generate evidence as to whether they have a dominant limb at all (either right or left), we use a two-sided probability that reflects both tails of the sampling distribution from the theoretical population:

2*frac14orMore

This probability can equally be estimated by:

frac4orLess+frac14orMore

Note that the difference between the two values above is due to the fact that our distribution was generated computationally. A distribution generated by infinite sampling would give exactly the same value, i.e., frac4orLess+frac14orMore would be exactly the same as 2*frac14orMore.

The computational approach developed here helps you understand the logic behind how statistical evidence about a scientific hypothesis is generated. In real applications, however, we would typically use a method that derives the sampling distribution based on an infinite number of possible samples (referred to as an exact test), using calculus rather than a computational approach. The corresponding probability can be calculated as follows. The first value is the number of right-handed toads, 14 (using the number of left-handed toads, 4, gives the same result, as shown below); the second value is the total sample size, 18; and the third value is the proportion assumed under H0, namely 50%/50% (or 0.5):

binom.test(14,18,0.5,alternative="two.sided")$p.value

The probability is 0.03088379, which is pretty close to our computational estimate. In my case, 2*frac14orMore was equal to 0.03194.
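To see what binom.test() is computing here, a short sketch: under H0 the number of right-handed toads follows a binomial distribution with n = 18 and p = 0.5, so the upper tail P(X ≥ 14) is the sum of dbinom() over 14 to 18 (or, equivalently, pbinom() with lower.tail = FALSE). Because the distribution is symmetric when p = 0.5, the two-sided p-value is simply twice that tail; this doubling shortcut would not hold for an asymmetric null such as p = 0.3.

```r
# Upper tail: probability of 14 or more right-handed toads out of 18 under H0
p.upper <- sum(dbinom(14:18, size = 18, prob = 0.5))

# Same tail from the cumulative distribution function: P(X > 13) = P(X >= 14)
p.upper.cdf <- pbinom(13, size = 18, prob = 0.5, lower.tail = FALSE)

# Two-sided p-value: double the tail (valid here because p = 0.5 makes the distribution symmetric)
p.two.sided <- 2 * p.upper
p.two.sided

# Compare with the exact test
binom.test(14, 18, 0.5, alternative = "two.sided")$p.value
```

Both printed values should agree with the 0.03088379 reported above.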

For the sake of completeness, let’s estimate the p-value based on the number of left-handed toads, i.e., 4 individuals:

binom.test(4,18,0.5,alternative="two.sided")$p.value

Obviously, the same p-value is produced!

Regardless of the approach (infinite or computational), the probability of finding a sample of 18 toads (from a theoretical population in which right- and left-handed individuals are equally frequent) that is as extreme as, or more extreme than, 14 right-handed and 4 left-handed toads (in either direction, hence using both sides of the distribution) is around 0.03. This probability tells us how unusual it is to find a sample like the one found by the study (i.e., 14 right-handed and 4 left-handed) in the theoretical population. The probability is quite small, thus providing evidence that the toads in the real data exhibit handedness. In other words, a sample with 14 right-handed (and 4 left-handed) toads is quite implausible under (inconsistent with) what is expected from a theoretical population in which half of the individuals are right-handed and the other half left-handed!

As such, our original sample of 14 right-handed and 4 left-handed toads seems to come from a population different from our theoretical population of 50% right-handed and 50% left-handed individuals. We then use this probability to generalize the results and state that “the study generated evidence that toads exhibit handedness.”

Now, let’s put this into an estimation perspective. The sample estimate of the proportion of right-handed toads is simply:

14/18

This gives 0.7777778. Let’s remember what a confidence interval is: “A confidence interval is a range of values surrounding the sample estimate that is likely to contain the population parameter.” If you need a refresher on this concept, please go to the WebBook for BIOL322 (access available in Moodle).

The 95% confidence interval for the estimated proportion above (i.e., 0.7777778) is also given by the function binom.test:

binom.test(14,18,0.5,alternative="two.sided")

As you can see, the 95% confidence interval is 0.5236272 - 0.9359080. As you can imagine, this is quite wide, but it qualitatively agrees (and will always do so) with the results of the statistical hypothesis test. Here, based on an alpha of 0.05 (the complement of the 95% confidence level) and a p-value of 0.03088, we rejected the null hypothesis. Note (obviously) that the confidence interval DOES NOT contain the uninteresting value assumed under the null hypothesis, 50%. Now let’s estimate the confidence interval for the proportion of left-handed toads:

binom.test(4,18,0.5,alternative="two.sided")

The proportion is 22.22% (0.2222), and its confidence interval of 0.06409205 - 0.47637277 (as expected) also does not contain the value assumed under H0 of 0.5 (50%).
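The intervals reported by binom.test() are Clopper-Pearson (“exact”) intervals, which can be computed directly from quantiles of the beta distribution with base R’s qbeta(). A minimal sketch for the 14 right-handed toads out of 18, which also checks, for this example, the agreement between the interval and the test discussed above:

```r
x <- 14          # right-handed toads
n <- 18          # sample size
alpha <- 0.05    # significance level; 1 - alpha = 0.95 confidence level

# Clopper-Pearson bounds from beta-distribution quantiles
lower <- qbeta(alpha / 2, x, n - x + 1)
upper <- qbeta(1 - alpha / 2, x + 1, n - x)
c(lower, upper)

# Interval reported by binom.test, without its conf.level attribute
ci.test <- as.numeric(binom.test(x, n, 0.5)$conf.int)
ci.test

# Agreement check: 0.5 lies outside the 95% CI and the p-value is below alpha
outside <- 0.5 < lower || 0.5 > upper
p <- binom.test(x, n, 0.5)$p.value
c(outside = outside, rejected = p < alpha)
```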

Now let’s estimate the confidence interval around the value assumed under the null hypothesis, i.e., 50%/50% or 0.5, which means 9 left-handed and 9 right-handed individuals:

binom.test(9,18,0.5,alternative="two.sided")

The confidence interval is 0.2601906 - 0.7398094. Note that the interval does not include the observed proportion 0.78 (i.e., 14/18). So,

1) the confidence interval for the observed value does not include the value under the null hypothesis (0.50); and

2) the confidence interval for the value assumed under the null hypothesis does not include the observed value (0.78).

Note, however, that the fact that they agree doesn’t mean that parameter estimation is unimportant; we should aim to improve it (and its confidence interval) whenever possible. By always aiming only at statistical hypothesis testing rather than parameter estimation, we restrict ourselves to, and become content with, a qualitative approach (reject or don’t reject the null hypothesis) instead of a quantitative one.

A critical note on sample size and its effect on statistical hypothesis testing and inference

By improving estimation (and its confidence interval), we can improve the quantitative characterization of a research problem. One way to improve estimation is to increase sample size, which may be extremely time-consuming and costly, and even ethically irresponsible (e.g., collecting and manipulating so many individuals that biological populations and species are put at risk). Let’s assume, for instance, that 1000 toads had been sampled instead, with the same proportion of right-handed individuals (i.e., 14/18 ≈ 778/1000):

binom.test(778,1000,0.5,alternative="two.sided")

The 95% confidence interval for 1000 individuals (0.7509431 - 0.8034091) is much narrower, and therefore much more informative, than the original one based on 18 individuals (0.5236272 - 0.9359080).
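To see how the interval narrows as the sample size grows while the observed proportion stays at about 0.778, here is a short sketch; the intermediate sample size of 100 (78 right-handed toads) is hypothetical and added only for illustration.

```r
# Keep the observed proportion (about 0.778) fixed and vary the sample size
ns <- c(18, 100, 1000)
xs <- round(0.7778 * ns)   # 14, 78 and 778 right-handed toads

# Width of the 95% confidence interval reported by binom.test for each sample size
widths <- mapply(function(x, n) diff(binom.test(x, n, 0.5)$conf.int), xs, ns)
data.frame(n = ns, right.handed = xs, ci.width = round(widths, 3))
```

The width shrinks roughly with the square root of the sample size, which is why large gains in precision demand much larger (and costlier) samples.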

We could have used the computational approach to estimate confidence intervals (and/or p-values) for any desired proportion and sample size; this also demonstrates that the sampling approach we use to create null distributions is the same one used for estimating confidence intervals. For that, we need to set the probabilities of right- and left-handed toads to the observed proportions instead of 50%/50%.

We use the command set.seed below so that the random number generator starts at the same point for all of us and we all obtain the same results.

set.seed(100)

number.samples <- 20000

n <- 1000

samples <- replicate(number.samples,sample(c("L", "R"), size = n, prob = c(1-0.778,0.778), replace = TRUE))

results <- apply(samples,MARGIN=2,FUN=function(x) sum(x == "R"))

proportions <- results/n

quantile(proportions, probs = c(0.025, 0.975))

The estimated 95% confidence interval (two-tailed, hence 0.025 and 0.975 above) using the computational approach is 0.752-0.804. Note that this confidence interval is extremely similar to the one obtained using the binomial distribution (via the binom.test function), which was 0.7509431 - 0.8034091. If it were possible to set the number of samples for the computational approach to infinity, the two intervals would be essentially the same.
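The simulation above stores a 1000 × 20000 matrix of letters, which can be slow and memory-hungry. Because the number of “R” in n independent draws with probability 0.778 follows a binomial distribution, a lighter sketch of the same computational approach draws those counts directly with base R’s rbinom(). This shortcut is our addition, not part of the original tutorial code, and its values will differ slightly from the 0.752-0.804 reported above because the random numbers are generated differently.

```r
set.seed(100)  # same seed as above, but rbinom() consumes random numbers differently
n <- 1000
number.samples <- 20000

# Each value is the proportion of "R" in a sample of n toads with P(R) = 0.778
proportions.rbinom <- rbinom(number.samples, size = n, prob = 0.778) / n

# Percentile (two-tailed) 95% interval from the simulated sampling distribution
ci.rbinom <- quantile(proportions.rbinom, probs = c(0.025, 0.975))
ci.rbinom
```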

We use these sorts of simulations so that you can gain a better understanding of what statistical distributions are. In practice, however, most commonly used distributions have closed-form solutions, i.e., they can be solved using formulas built to generate probabilities and confidence intervals (among other types of statistical inferential tools).

Taken together, the goal here was to demonstrate that there is no real difference in the calculations behind statistical hypothesis testing and confidence interval estimation. There is, however, a big difference in philosophy, aims, and information.

Finally, for the sake of discussion, let’s assume that the proportion found was different, say 1 left-handed and 17 right-handed toads:

binom.test(1,18,0.5,alternative="two.sided")

The p-value is obviously much smaller (0.000145), and the confidence interval is much narrower (0.001405556 - 0.272943600), providing greater confidence about the sample estimate; it also agrees with the rejection of the null hypothesis, i.e., it does not include the value assumed under H0 of 0.5!

As we saw, the qualitative answer from statistical hypothesis testing will always agree with the quantitative answer based on the confidence interval estimate. Why, then, do we so often use statistical hypothesis testing? There are two important issues to consider:

We love yes-or-no answers; they make decisions much easier (but there are issues involved, as discussed in Lecture 3).

Some effect estimates are difficult to express as an easy and straightforward quantitative value. Let’s assume an ANOVA design for estimating the effect of four different temperature levels (low, intermediate, high and very high) on fish growth.

The data are transformed into an F-value that summarizes all the information in one value. We can certainly estimate the confidence interval for the F-statistic, but that is often difficult to translate quantitatively. So, researchers resort to an easier value, i.e., the p-value for the F-value, to arrive at a qualitative conclusion. That said, we should always consider reporting the confidence interval for each sample mean, as these intervals are indeed more informative than statistical hypothesis testing.

We hope that this tutorial helped you gain a better understanding of the elements we covered in Lectures 2 and 3. The paper found in the link below, “Interpreting Research Findings With Confidence Interval”, is also very helpful for understanding the points made here:

Selected exercise

All the information and code needed for you to solve this problem is in this tutorial.

The problem is based on the Ideal Free Distribution (IFD; Fretwell & Lucas 1970), as described in Wikipedia (https://en.wikipedia.org/wiki/Ideal_free_distribution). The IFD is an ecological theory that predicts that individuals of a species will distribute themselves among resource patches in proportion to the amount of resources available in each patch. For example, if patch A contains twice as many resources as patch B, then twice as many individuals should forage in patch A compared to patch B. Under this theory, such a distribution minimizes resource competition and maximizes individual fitness.

Suppose you conduct an experiment to test the Ideal Free Distribution (IFD) in a species of grasshoppers. You establish two connected patches (two tanks linked by a tube) and provide each tank with 50% of the total food supply. You initially place 10 grasshoppers in each tank. After six hours, you return to count the number of individuals in each tank (allowing for the possibility that grasshoppers may have moved between them). At that time, you find 3 grasshoppers in tank 1 and, consequently, 17 in tank 2 (assuming no individuals died during the experiment).

Do these results provide evidence for or against the Ideal Free Distribution?

Develop code to estimate whether the results are consistent or not with the IFD. Code should include both the computational approach and the exact test.

What would be your answer on the basis of the code and the concepts you have learned above?

Reference: Fretwell, S. D. & Lucas, H. L., Jr. 1970. On territorial behavior and other factors influencing habitat distribution in birds. I. Theoretical Development. Acta Biotheoretica 19: 16–36.

Answer

Set number of samples for the computational approach:

number.samples <- 100000 # could be more, but 100000 is quite enough to generate the null distribution computationally

Generate the sampling distribution under H0 (computationally). You can call the variables anything, including the labels for the tanks (Tank1, Tank_1, or something else):

samples.n20 <- replicate(
  number.samples,
  sample(c("Tank1", "Tank2"), size = 20, prob = c(0.5, 0.5), replace = TRUE)
)

dim(samples.n20)

Create the frequency distribution under H0. You can count individuals in either Tank 1 or Tank 2; the results will be equivalent because, under H0, each tank is expected to hold 50% of the individuals.

results.n20 <- apply(samples.n20, MARGIN = 2, FUN = function(x) sum(x == "Tank1"))

head(results.n20)

Table.TheoreticalPop <- table(results.n20, dnn = "number Ind Aquarium 1") # absolute values

Table.TheoreticalPop/number.samples # relative values, i.e., probabilities

Graphing the probability distribution:

barplot(height = Table.TheoreticalPop/number.samples,
        space = 0,
        las = 1,
        cex.names = 0.8,
        col = "firebrick",
        xlab = "Number of grasshoppers in Tank 1",
        ylab = "Probability")

What is the probability of getting 3 or fewer in Tank 1, or 17 or more, if H0 is true?

frac3orLess <- sum(results.n20 <= 3)/number.samples

frac17orMore <- sum(results.n20 >= 17)/number.samples

Total two-sided probability:

frac3orLess+frac17orMore

You should obtain a value around 0.002–0.003 (small differences arise from random variation, because the computational approach is based on a “limited” number of samples rather than an infinite number of samples).

Exact test:

binom.test(3,20,0.5,alternative="two.sided")$p.value

The probability should be around 0.0026, very similar to the computational approach.
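As a check on the exact test, the two-sided p-value is the sum of both tails of the binomial distribution with n = 20 and p = 0.5: P(X ≤ 3) plus P(X ≥ 17). A minimal sketch with base R’s pbinom() (the object names p.lower, p.upper and p.exact are ours):

```r
# Exact two-sided p-value for 3 grasshoppers in Tank 1 out of 20, under H0: p = 0.5
p.lower <- pbinom(3, size = 20, prob = 0.5)                       # P(X <= 3)
p.upper <- pbinom(16, size = 20, prob = 0.5, lower.tail = FALSE)  # P(X >= 17) = P(X > 16)
p.exact <- p.lower + p.upper
p.exact

# Should match the p-value reported by binom.test
binom.test(3, 20, 0.5, alternative = "two.sided")$p.value
```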

Confidence interval for the observed proportion in Tank 1:

binom.test(3, 20, 0.5, alternative = "two.sided") # 3 out of 20 grasshoppers, H0 = 0.5

You should get something like: 95% CI ≈ [0.03207, 0.3789]. This interval represents the range of plausible values for the true proportion of grasshoppers that would choose Tank 1 in the long run. Note that 0.5 is not contained in this interval, which is consistent with the p-value being much smaller than 0.05 (alpha, the complement of the 95% confidence level).

Confidence interval for the observed proportion in Tank 2:

binom.test(17, 20, 0.5, alternative = "two.sided") # 17 out of 20 grasshoppers, H0 = 0.5

You should get something like: 95% CI ≈ [0.6210, 0.9679]. This interval now represents the range of plausible values for the true proportion of grasshoppers that would choose Tank 2. Again, 0.5 is not contained in the interval, which is consistent with the same small p-value obtained before.

Do these results provide evidence for or against the Ideal Free Distribution?

“The observed distribution of grasshoppers (3 vs. 17) is highly inconsistent with the Ideal Free Distribution; therefore, the experiment provides strong evidence against the IFD in this case (p ≈ 0.003).”