Tutorial 8: The t-distribution

The t distribution and its use in statistical hypothesis testing

Week of October 31, 2022 (8th week of classes)

How the tutorials work

The DEADLINE for your report is always at the end of the tutorial.


After a while, students may be able to complete their reports without attending the synchronous lab/tutorial sessions. That said, we highly recommend that you attend the tutorials, as your TA is not responsible for assisting you with lab reports outside of the synchronous lab sessions.

The REPORT INSTRUCTIONS (what you need to do to get the marks for this report) are found at the end of this tutorial.

Your TAs

Section 101 (Wednesday): 10:15-13:00 - John Williams (j.p.w@outlook.com)

Section 102 (Wednesday): 13:15-16:00 - Hammed Akande (hammed.akande@mail.mcgill.ca)

Section 103 (Friday): 10:15-13:00 - Michael Paulauskas (michael.paulauskas@mail.mcgill.ca)

Section 104 (Friday): 13:15-16:00 - Alexandra Engler (alexandra.engler@hotmail.fr)

General Information

Please read all the text; don’t skip anything. Tutorials are based on R exercises that are intended to give you practice running statistical analyses and interpreting their results.

Note: Once you get used to R, things get much easier. Try to remember to:

- set the working directory

- create an R script file in RStudio

- enter commands as you work through the tutorial

- save the file from time to time (to avoid losing it), and

- run the commands in the file to make sure they work.

- if you want to comment the code, start each comment with #, e.g., # this code is to calculate…

General setup for the tutorial

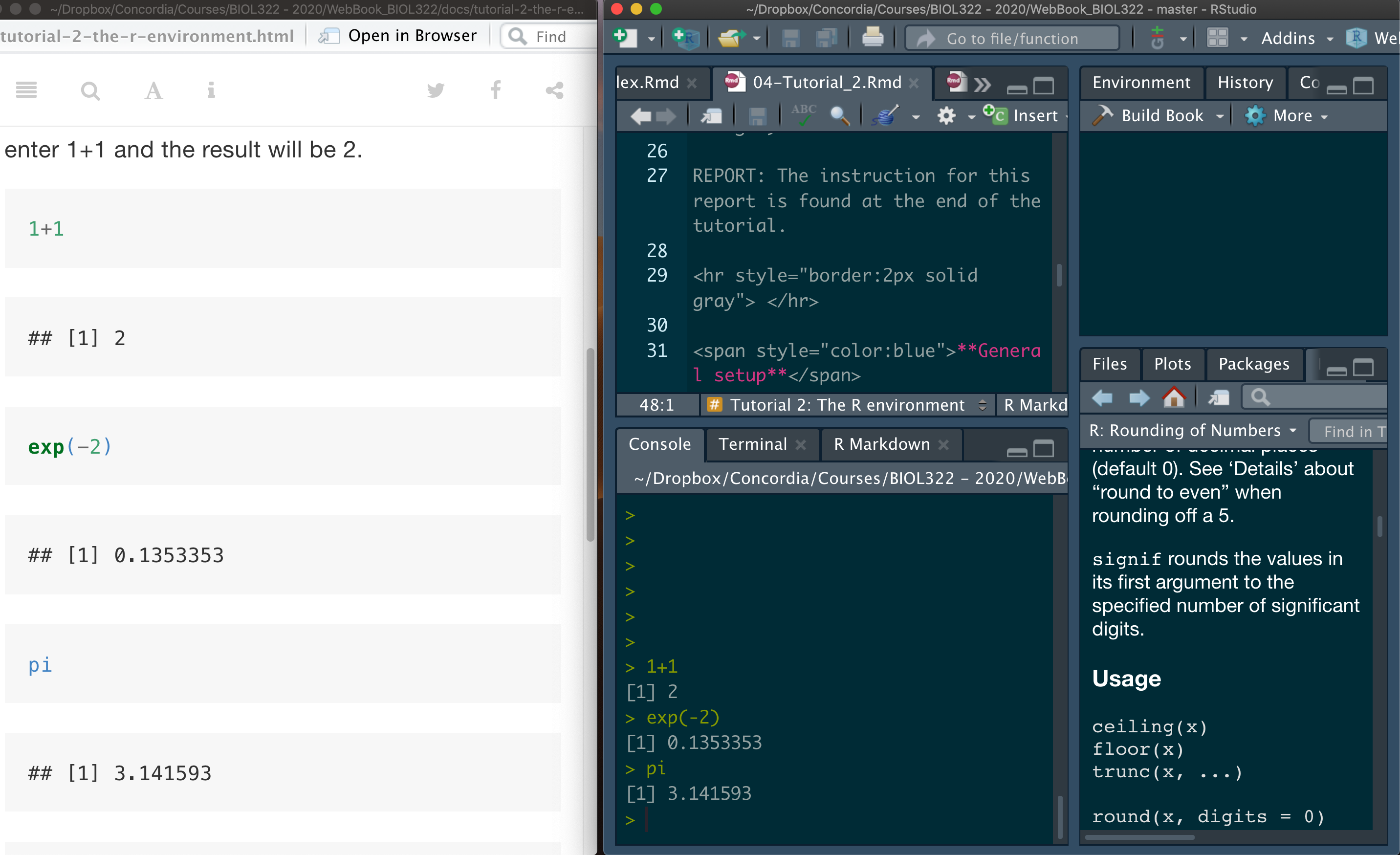

A good way to work through the tutorial is to have it open in our WebBook with RStudio open beside it.

A computational approach to understanding how the t distribution was conceptualized more than 100 years ago:

Let’s assume a normally distributed statistical population: mean (mu) = 98.6 and standard deviation (sigma) = 5.

Here we will build the sampling distribution of means for this population using “finite sampling”. In other words, we will use a computational process, as we did in other tutorials, to approximate the sampling distribution by:

a) generating a large number of samples from the population;

b) calculating the mean and standard deviation of each sample; and

c) building the frequency distribution of the standardized sample means (i.e., the sampling distribution of standardized values). We will explain the standardization process a bit later.
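As a rough sketch, the function below wraps steps a) to c) into a single call. The name simulate.standardized.means is hypothetical and is not used elsewhere in this tutorial. The function assumes n is at least 2, since a standard deviation needs two observations and replicate() would not return a matrix for n = 1. It uses only 10000 samples so that it runs in a second or two. colMeans is a faster base-R equivalent of apply(..., MARGIN=2, FUN=mean).

```r
# Hypothetical helper (not part of the tutorial's code): steps a) to c) in one function.
# Assumes n >= 2 so that each sample has a standard deviation.
simulate.standardized.means <- function(number.samples, n, mu, sigma) {
  # a) draw number.samples samples of size n; each column of the matrix is one sample
  samples <- replicate(number.samples, rnorm(n = n, mean = mu, sd = sigma))
  # b) mean and standard deviation of each sample (i.e., of each column)
  sample.means <- colMeans(samples)
  sample.sds <- apply(samples, MARGIN = 2, FUN = sd)
  # c) standardize each sample mean by the standard error estimated from its own sample
  (sample.means - mu) / (sample.sds / sqrt(n))
}

set.seed(1)  # only to make this small example reproducible
small.t <- simulate.standardized.means(number.samples = 10000, n = 25, mu = 98.6, sigma = 5)
length(small.t)
c(mean(small.t), sd(small.t))
```

The returned vector holds one standardized value per sample. hist(small.t) would display the frequency distribution described in step c).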

The sampling distribution of standardized sample means is t-distributed (i.e., it follows the t-distribution). Using the t-distribution, we can calculate a p-value for the t-value observed in the data. This p-value is the proportion of values in the t distribution that are equal to or greater than the absolute value of the observed t-value, or equal to or smaller than minus that absolute value. The process can be summarized as follows (as seen in class):

The t distribution was derived using calculus, i.e., assuming infinite sampling. However, a finite approximation (via computer) allows us to understand the intuition that was used to build it. We will build the t distribution based on a sample size (n) of 25 observations (i.e., the number of people whose temperatures were measured). Be patient: this will take a little while (probably a bit more than a minute!). Here we will use 1000000 replicates instead of our usual 100000, because more samples are needed to approximate the t-distribution computationally with the required precision.

number.samples <- 1000000

samples.pop.1 <- replicate(number.samples,rnorm(n=25,mean=98.6,sd=5))

dim(samples.pop.1)

The matrix has 25 rows (observations) and 1000000 columns (samples). Next, calculate the mean and standard deviation of each sample, i.e., of each column of the matrix (this will also take a little time given the large number of samples):

sampleMeans.Pop1 <- apply(samples.pop.1,MARGIN=2,FUN=mean)

sampleSDs.Pop1 <- apply(samples.pop.1,MARGIN=2,FUN=sd)

As you know by now, the mean of the sampling distribution of means is equal to the mean of the population (mu = 98.6). The two values below are not exactly the same because we used finite sampling. However, the computational and the analytical (calculus-based) approaches rest on the same concept: building the sampling distribution for the theoretical population of interest, i.e., under the null hypothesis:

c(mean(sampleMeans.Pop1),98.6)

Next, we calculate the standard deviation of the sampling distribution of means as sd(sampleMeans.Pop1), which is called the standard error of the mean. This quantity is equal to the standard deviation of the population (sigma = 5) divided by the square root of the sample size (n = 25), i.e., 5/sqrt(25) = 1. Note that these two values are also very close:

c(sd(sampleMeans.Pop1),5/sqrt(25))

The t-distribution needs to be universal, i.e., applicable to any continuous variable regardless of its population mean, its standard deviation and its measuring units (cm, kg, etc.). To achieve this, we standardize.

First, we subtract the population mean from each sample mean, so that the sampling distribution of means is centered on zero. Next, we divide each centered value by the standard error estimated from its own sample (the sample standard deviation divided by sqrt(25)). Because each sample's own standard deviation is used instead of sigma, the standardized values follow a t distribution rather than a standard normal distribution. The subtraction and division are done as follows:

standardized.SampDist.Pop1 <- (sampleMeans.Pop1-98.6)/(sampleSDs.Pop1/sqrt(25))

We could also have subtracted the mean of the sample means instead of the population mean, since the two are practically the same:

standardized.SampDist.Pop1 <- (sampleMeans.Pop1-mean(sampleMeans.Pop1))/(sampleSDs.Pop1/sqrt(25))

Let’s calculate the mean and standard deviation of this standardized sampling distribution of means:

c(mean(standardized.SampDist.Pop1),sd(standardized.SampDist.Pop1))

As you can see, the mean of the standardized sampling distribution is very close to zero. With finite sampling it will never be exactly zero. Getting much closer would require far more samples (e.g., 1000000000), which would take too long on a regular laptop.
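To see why the mean is never literally zero, here is a rough sketch of the typical size of the simulation error. It assumes the simulated values are independent and have the theoretical standard deviation sqrt(24/22). Under that assumption, the standard error of their mean is that standard deviation divided by the square root of the number of samples:

```r
# Theoretical standard deviation of the t distribution with 24 degrees of freedom
sd.t24 <- sqrt(24 / (24 - 2))

# Numbers of simulated samples (replicates) to compare
n.replicates <- c(1e5, 1e6, 1e9)

# Typical distance of the simulated mean from zero (Monte Carlo standard error of the mean)
mc.se.mean <- sd.t24 / sqrt(n.replicates)
data.frame(n.replicates, mc.se.mean)
```

With 1000000 samples the expected deviation is about 0.001. Even 1000000000 samples would only shrink it to about 0.00003, not to zero.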

The standard deviation should be close to sqrt(v/(v-2)), also written sqrt(df/(df-2)), where v (or df) is the number of degrees of freedom, here df = 25-1 = 24 (the formula applies when df > 2):

sqrt(24/(24-2))

Note how similar the value above is to sd(standardized.SampDist.Pop1). The standard deviation of the standardized t-distribution therefore changes as a function of sample size (i.e., degrees of freedom), whereas its mean is always zero (as we have seen above).

Moreover, the standard deviation is always sqrt(df/(df-2)), regardless of the standard deviation assumed for the normal population used to generate the distribution. This is because both the numerator (sample mean minus population mean) and the denominator (estimated standard error) of each standardized value scale with sigma, so sigma cancels out.

Let’s verify the above:

number.samples <- 1000000

samples.pop.2 <- replicate(number.samples,rnorm(n=25,mean=98.6,sd=8.5)) # instead of 5 as used earlier

sampleMeans.Pop2 <- apply(samples.pop.2,MARGIN=2,FUN=mean)

sampleSDs.Pop2 <- apply(samples.pop.2,MARGIN=2,FUN=sd)

standardized.SampDist.Pop2 <- (sampleMeans.Pop2-98.6)/(sampleSDs.Pop2/sqrt(25))

c(sd(standardized.SampDist.Pop2),sqrt(24/(24-2)))

See! Even though we changed the normal population used to generate the standardized t-distribution (i.e., instead of a standard deviation of 5 as for population 1, we used a standard deviation of 8.5), the standard deviation of the standardized t-distribution is still sqrt(df/(df-2)).
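The sketch below is not part of the original tutorial code; it makes two of the points above concrete.

First, it tabulates sqrt(df/(df-2)) for a few sample sizes. It then checks the value for df = 24 against the calculus-based density by numerically integrating x^2 * dt(x, 24) with integrate.

Second, it standardizes the same simulated samples twice: once with the known sigma (giving z values) and once with each sample's own standard deviation (giving t values).

The object names and the choice of 50000 samples are illustrative assumptions.

```r
# 1) Theoretical sd of the standardized t distribution for several sample sizes
sample.size <- c(5, 10, 25, 100)
deg.free <- sample.size - 1
sd.theory <- sqrt(deg.free / (deg.free - 2))   # formula valid only for df > 2
data.frame(sample.size, deg.free, sd.theory)

# Variance from the calculus-based density: integral of x^2 * dt(x, df = 24)
var.df24 <- integrate(function(x) x^2 * dt(x, df = 24), lower = -Inf, upper = Inf)$value
c(var.df24, 24 / (24 - 2))

# 2) Standardizing with the known sigma (z) versus each sample's own sd (t)
set.seed(2)  # only for reproducibility of this example
sims.zt <- replicate(50000, rnorm(n = 25, mean = 98.6, sd = 5))
means.zt <- colMeans(sims.zt)
sds.zt <- apply(sims.zt, MARGIN = 2, FUN = sd)
z.values <- (means.zt - 98.6) / (5 / sqrt(25))       # known sigma: standard normal, sd close to 1
t.values <- (means.zt - 98.6) / (sds.zt / sqrt(25))  # sample sd: t with 24 df, sd close to sqrt(24/22)
c(sd(z.values), sd(t.values), sqrt(24 / 22))
```

The sd.theory column shrinks toward 1 as the sample size grows. This is why the t distribution looks more and more like the standard normal distribution for large samples.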

Let’s plot the sampling distribution (zoom in so that you can see it properly). The argument prob=TRUE in the function hist rescales the bars from frequencies to probability densities, so that the total area of the histogram equals 1. Remember (as seen in lecture 13) that we are using a continuous variable (i.e., temperature) and, as such, we use probability densities instead of frequencies:

hist(standardized.SampDist.Pop1, prob = TRUE, xlab = "standardized mean values",ylab = "probability", main="",xlim=c(-4,4))

Now, let’s plot the t distribution on top of the histogram. We will plot the t distribution with 24 degrees of freedom. Remember that we used n = 25 and that we lost 1 degree of freedom (df = n-1), because the standard deviation used to standardize each sample is computed around that sample's own mean.

The t distribution is plotted using the function curve together with the function dt. The function dt calculates the probability density function (pdf, as discussed in class) of the t distribution for the given degrees of freedom. Note that our computer approximation of the t distribution is practically the same as the t distribution derived using calculus for infinite sampling:

hist(standardized.SampDist.Pop1, prob=TRUE, xlab = "standardized mean values",ylab = "probability", main="",xlim=c(-4,4),ylim=c(0,0.4))

curve(dt(x,df=25-1), xlim=c(-4,4),col="darkblue", lwd=2, add=TRUE, yaxt="n")

Using the t-distribution for statistical hypothesis testing

We will use here the example we covered in class regarding human body temperature. We state the null and alternative hypotheses:

\(H_0\) (null hypothesis): the mean human body temperature is 98.6°F.

\(H_A\) (alternative hypothesis): the mean human body temperature is different from 98.6°F.

Here we will decide whether to reject or not reject the null hypothesis \(H_0\) by calculating the probability (or p-value) of obtaining the observed mean temperature, or a more extreme one, assuming that the null hypothesis (\(\mu\)=98.6\(^o\)F) is true.

We will start by using the calculus-based t distribution to calculate the p-value for our data. Later, we’ll contrast this probability with our computer-based approximation. Here we will compare the body temperatures in a random sample of 25 people with the “expected” temperature of 98.6°F taught in schools.

Download the temperature data file (chap11e3Temperature.csv)

Now, let’s load and inspect the data:

heat <- as.matrix(read.csv("chap11e3Temperature.csv"))

mean(heat)

View(heat)

Let’s calculate the t statistic (also called t score, or t deviate) as seen in class. This is simply the standardized value of our observed sample mean, which makes it comparable to the standardized t distribution. We subtract the population mean (assumed to be true under the null hypothesis Ho of interest) from the observed sample mean, and divide the result by the standard error estimated from the sample. This is exactly what we did to build the t distribution computationally; what we do now is transform our own sample so that it is comparable to that distribution:

t.value.temperature <- (mean(heat)-98.6)/(sd(heat)/sqrt(25))

t.value.temperature

What is the probability of obtaining a sample mean as extreme as or more extreme than 98.524°F, given that the population mean is 98.6°F? Here, more extreme means smaller, since the sample mean is smaller than the hypothesized population mean.

Remember that the mean of the t distribution (sampling distribution of standardized means) is zero and the t score for 98.524\(^o\)F (sample mean based on 25 people) is t = -0.5606452.

So the question becomes: “What is the probability of obtaining t scores as extreme as or more extreme than -0.5606452, assuming that the population mean (when standardized) is truly zero (i.e., 98.6°F)?” Remember, 98.524°F corresponds to t = -0.5606452 after standardization, AND 98.6°F (unstandardized) corresponds to zero after standardization. This probability can be calculated using the function pt (pt stands for probability of t), which returns the area of the t distribution to the left of a given value:

Prob.t.value.temperature <- pt(-0.5606452,df=24)

Prob.t.value.temperature

The probability is 0.2901181. However, our interest here is not to generate evidence that human body temperature is lower than the one we learn in textbooks. Rather, we want evidence as to whether it differs (lower or higher) from that temperature.

In this case, we adopt a probability that reflects both sides of the t distribution. This assesses whether the observed sample t value (-0.5606452) is consistent with the t values in the sampling distribution that reflects the null hypothesis, i.e., centered on zero (or on 98.6°F in the original sampling distribution of sample means before standardization). Because the t distribution is symmetric around zero, this two-tailed probability is simply twice the one-tailed probability:

Prob.t.value.temperature.bothSides <- 2*Prob.t.value.temperature

Prob.t.value.temperature.bothSides

This probability (p-value = 0.5802363) represents the proportion of values in the t distribution that are equal to or greater than 0.5606452, or equal to or smaller than -0.5606452. This can be represented graphically as:

hist(standardized.SampDist.Pop1, prob=TRUE, xlab = "standardized mean values",ylab = "probability", main="",xlim=c(-4,4),ylim=c(0,0.4))

curve(dt(x,df=25-1), xlim=c(-4,4),col="darkblue", lwd=2, add=TRUE, yaxt="n")

abline(v=0.5606452,col="red",lwd=2)

abline(v=-0.5606452,col="red",lwd=2)

The p-value is the proportion of values at or beyond the red vertical lines, i.e., to the left of the left line and to the right of the right line. This p-value is then used as evidence for or against rejecting the null hypothesis.
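As a check of the graphical idea, the two tails can be integrated directly. This sketch hardcodes the observed t score from above and assumes 24 degrees of freedom. It computes the area under dt beyond each red line with integrate and compares the total with 2*pt(...):

```r
t.obs <- -0.5606452   # observed t score of the temperature sample (from above)

# Area under the t density to the left of the left red line
left.tail <- integrate(dt, lower = -Inf, upper = -abs(t.obs), df = 24)$value

# Area under the t density to the right of the right red line
right.tail <- integrate(dt, lower = abs(t.obs), upper = Inf, df = 24)$value

# Both numbers should agree with the two-tailed p-value reported above (about 0.5802)
c(left.tail + right.tail, 2 * pt(-abs(t.obs), df = 24))
```

Because the t density is symmetric, left.tail and right.tail are equal, which is why doubling one tail gives the two-tailed p-value.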

We can also use the function t.test to calculate the same two-tailed probability (look for the p-value in the output, p-value = 0.5802):

t.test(heat, mu=98.6)

Interpreting the p-value under a statistical hypothesis testing framework:

The p-value assists in building quantitative (statistical) evidence for or against the null hypothesis. Given that the p-value is larger (and much larger) than the significance level (here we will apply \(\alpha=0.05\)), we do not reject the null hypothesis.

Note that we can’t say that the human body temperature taught in textbooks (98.6°F) is true. All we can say is that, based on our sample, we can’t reject the hypothesis that it is indeed 98.6°F. REMEMBER: we build evidence for or against rejecting the null hypothesis, never for accepting it.
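The decision rule can be written out in code. This is a minimal sketch that hardcodes the observed t score and alpha = 0.05. It also shows the critical-value approach, which is not used in this tutorial. That approach compares |t| with qt(1 - alpha/2, df) and always leads to the same decision as comparing the p-value with alpha:

```r
alpha <- 0.05          # significance level
t.obs <- -0.5606452    # observed t score of the temperature sample
deg.free.temp <- 25 - 1

# p-value approach: reject H0 if the two-tailed p-value is at most alpha
p.value <- 2 * pt(-abs(t.obs), df = deg.free.temp)
reject.by.p <- p.value <= alpha

# Critical-value approach: reject H0 if |t| is at least the (1 - alpha/2) quantile
t.critical <- qt(1 - alpha / 2, df = deg.free.temp)   # about 2.06 for 24 df
reject.by.t <- abs(t.obs) >= t.critical

c(p.value = p.value, t.critical = t.critical)
c(reject.by.p = reject.by.p, reject.by.t = reject.by.t)   # both FALSE here: do not reject H0
```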

Let’s now calculate this probability using our computer-approximated t distribution. To estimate the probability of obtaining t scores as extreme as or more extreme than -0.5606452, we count the standardized values in our sampling distribution that are smaller than or equal to the observed t score. We then divide that count by the total number of sample means we generated (i.e., 1000000), and double the result to obtain the two-tailed probability:

Prob.t.finite <- length(which(standardized.SampDist.Pop1<= -0.5606452))/number.samples

Prob.t.finite*2

Note how similar the computer-based and the infinite-sampling results are: the calculus-based t distribution gives p-value = 0.5802, and I obtained Prob.t.finite*2 = 0.581096. Your value will be slightly different from mine due to chance alone, as we used finite sampling. If we could run the computer an infinite number of times, the computer-generated value would be exactly the same as the calculus-based one (i.e., using the function pt above)! You get the point by now!
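The chance variation mentioned above can be quantified with a Monte Carlo standard error, sqrt(p(1-p)/N), for a proportion estimated from N simulated values. The helper below is hypothetical and not part of the tutorial. It counts both tails at once with abs() rather than doubling one tail, which estimates the same quantity because the t distribution is symmetric. To keep the example self-contained, it draws values directly from the t distribution with rt instead of reusing standardized.SampDist.Pop1:

```r
# Hypothetical helper: two-tailed empirical p-value and its Monte Carlo standard error
empirical.p.two.sided <- function(simulated.t, t.obs) {
  # proportion of simulated values at least as far from zero as the observed t score
  p.hat <- mean(abs(simulated.t) >= abs(t.obs))
  # Monte Carlo standard error of that proportion
  mc.se <- sqrt(p.hat * (1 - p.hat) / length(simulated.t))
  c(p.value = p.hat, mc.se = mc.se)
}

set.seed(3)  # only for reproducibility of this example
sim.t <- rt(100000, df = 24)   # draws from the calculus-based t distribution
res <- empirical.p.two.sided(sim.t, t.obs = -0.5606452)
res
2 * pt(-0.5606452, df = 24)   # calculus-based value for comparison
```

With your own simulation, empirical.p.two.sided(standardized.SampDist.Pop1, -0.5606452) should give a value close to 0.5802. With 1000000 samples, the standard error should be roughly 0.0005.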

Report instructions

All the information needed for you to solve this problem is in the tutorial.

The parameters we assumed to create the statistical population used to generate the standardized t distribution were a mean of 98.6 and sd=5. Create the t-distribution computationally, but using a mean much different from 98.6 and a standard deviation different from 5. Keep the sample size at 25 observational units.

Produce code to calculate the probability of obtaining t scores as extreme as or more extreme than -0.5606452 (i.e., the t score for the sample of 25 temperatures). Use your own t-distribution created above (i.e., based on the computational approach), which assumes H\(_0\) is true. Also include code for plotting the histogram of your own t-distribution.

Answer the following question:

Is there a large difference between the probability obtained from the standardized sampling distribution based on your population and the one obtained earlier from the population with mu=98.6 and sigma=5?

Write some text explaining your answer on the basis of the code and the concepts you have learned above.

# use as many lines as necessary,

# comments always starting with #

Submit the RStudio file via Moodle.